In an era where data fuels innovation, the shift towards real-time processing is transforming how businesses operate. Uber’s recent launch of their streaming data lake system, IngestionNext, exemplifies this evolution, slashing latency and compute usage by a remarkable 25%. As we navigate the increasing demand for data-driven decisions, the urgency to ensure data freshness is becoming vital. By moving to a streaming-first architecture, organizations can foster better analytics and machine learning capabilities, dramatically enhancing operational efficiency. This article explores the intricate workings and benefits of Uber’s new approach, inviting you to uncover similar strategies addressed in our discussions on marketing transformation and system outages.

Understanding the Streaming Data Lake Revolution

A streaming data lake is designed to accommodate continuous data flows, enabling immediate analysis and response. By transitioning from scheduled batch processes to an event-driven model, businesses can expect increased data availability and reliability. As Kai Waehner, Uber’s global field CTO noted, this orientation emphasizes data freshness as a key dimension of quality.

The impact of such architectures is significant for various industries. Industries heavily reliant on data analytics, such as finance and retail, can capitalize on the rapid insights provided by a streaming data lake. By leveraging real-time data, companies can make informed decisions quickly, improving their competitive edge.

The Technical Marvel Behind IngestionNext

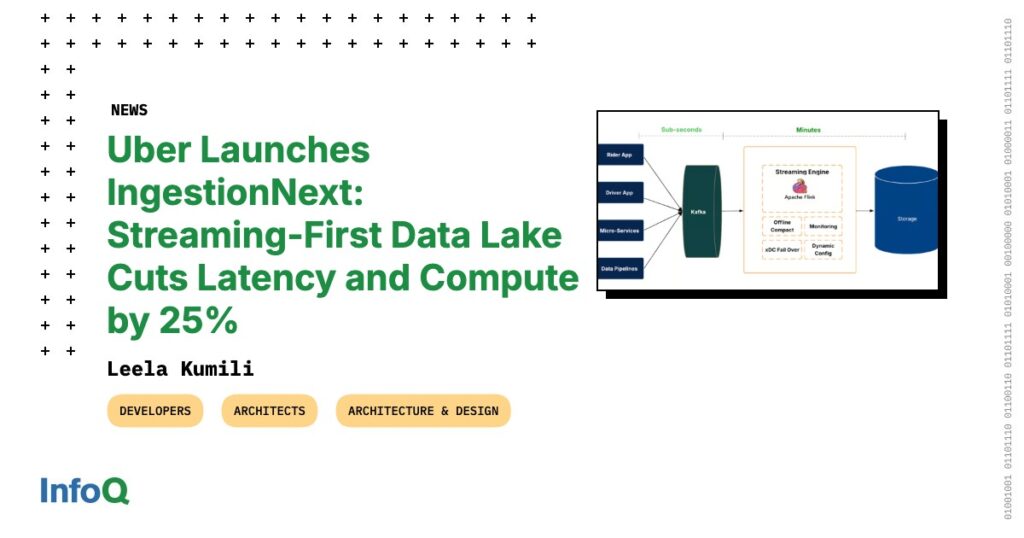

IngestionNext employs a cutting-edge architecture that processes event streams in real-time. Previously, Uber relied on Apache Spark to manage batch processing, which created latency issues that delayed data availability for subsequent analytics. The shift to a streaming-first system drastically reduces this latency from hours to mere minutes.

- Apache Kafka: This tool facilitates high-throughput data streams, enabling seamless integration into the data lake.

- Flink Jobs: These processes manage data transformations, committing them efficiently into the system.

Such architecture not only enhances performance but also supports high global data volumes, providing quicker access to analytical dashboards and experimentation platforms. These advances echo strategies discussed in our examination of AI in accounting which also prioritize real-time capabilities.

Overcoming Challenges in Streaming Data Processing

Transitioning to a streaming data lake model is not without its challenges. One major hurdle is managing the influx of small files that can hinder query performance and storage efficiency. To tackle this issue, Uber engineers developed row-group-level merging strategies for Parquet files, alongside compaction mechanisms to streamline file handling during continuous ingestion.

Examples of these innovations include:

- Checkpointing: Ensuring that data can be accurately captured at various processing stages.

- Recovery Strategies: These are critical for maintaining data integrity during technical failures.

By incorporating these solutions, Uber’s engineers have significantly improved the efficiency of their streaming data lake, allowing for a more robust analytics framework yet to be explored in various industries as indicated in our analyses of AI recruitment innovation.

Enhancing Resource Efficiency with IngestionNext

One of the standout advantages of IngestionNext is its ability to reduce the compute usage by approximately 25%. This shift from batch to continuous processing not only frees up computing resources but also optimizes operational costs. As a result, engineers can focus on enhancing data analytics and machine learning without worrying about overwhelming their processing capacities.

Key resource efficiency strategies include:

- Elimination of redundant batch jobs that hinder performance.

- Implementing scalable streaming jobs tailored to incoming data volumes.

This enables companies to maintain a fully integrated real-time data stack, where every aspect—from ingestion to analytics—functions seamlessly together.

The Future of Streaming Data Lakes

While IngestionNext improves the initial data speed, it is crucial to address potential latencies within downstream transformation processes. Moving forward, Uber aims to enhance its streaming capabilities across the entire analytics workflow. This evolution signifies a pivotal transformation in how businesses can approach their data operations.

As organizations adopt streaming data lakes, we anticipate a surge in real-time analytics across diverse sectors. The benefits observed are not confined to just one sector; various fields can adapt these lessons to innovate their analytics frameworks, as similarly discussed in our review of coding tools for developers.

To deepen this topic, check our detailed analyses on Apps & Software section